There’s a particular emotion that I felt a lot over 2019, much more than any other year. I expect it to continue in future years. That emotion is what I’m calling “algorithmic horror”.

It’s confusion at a targeted ad on Twitter for a product you were just talking about.

It’s seeing a “recommended friend” on Facebook who you haven’t seen in years and don’t have any contact with.

It’s skimming a Tumblr post with a banal take and not really registering it, and then realizing it was written by a bot.

It’s those baffling Lovecraftian kids’ videos on YouTube.

It’s a disturbing image from ArtBreeder, dreamed up by a computer.

I see this as an outgrowth of ancient, evolution-calibrated emotions. Back in the day, our lives depended on quick recognition of the signs of other animals – predator, prey, or other humans. There’s a moment I remember from animal tracking where disparate details of the environment suddenly align – the way the twigs are snapped and the impressions in the dirt suddenly resolve themselves into the idea of deer.

In the built environment of today, we know that most objects are built by human hands. Still, it can be surprising to walk in an apparently remote natural environment and find a trail or structure, evidence that someone has come this way before you. Skeptic author Michael Shermer calls this “agenticity”, the human bias towards seeing intention and agency in all sorts of patterns.

Or, as argumate puts it:

the trouble is humans are literally structured to find “a wizard did it” a more plausible explanation than things just happening by accident for no reason.

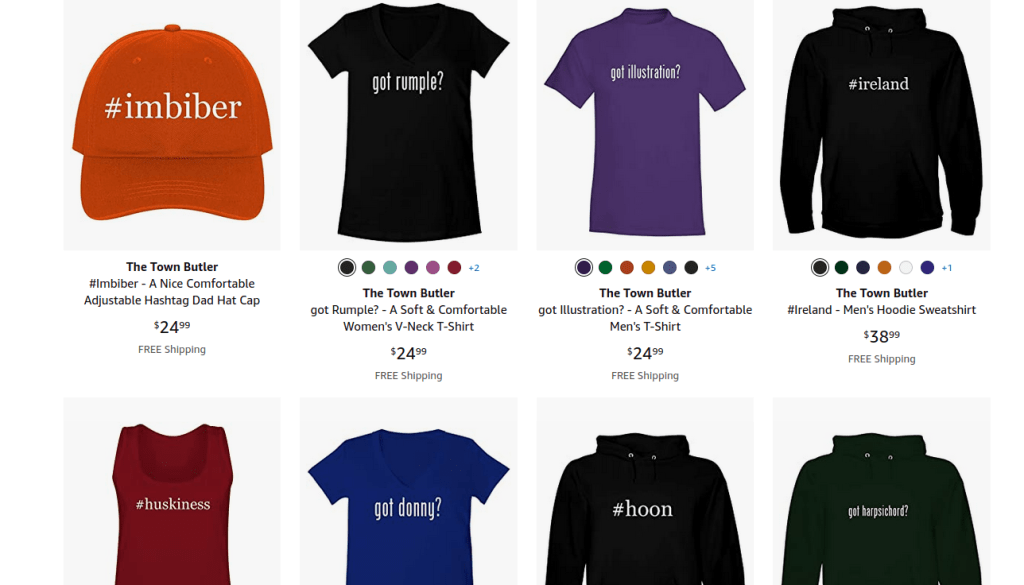

I see algorithmic horror as an extension of this, built objects masquerading as human-generated. I looked up oarfish merchandise on Amazon, to see if I could buy anything commemorating the world’s best fish, and found this hat.

If you look at the seller’s listing, you can confirm that all of their products are like this.

It’s a bit incredible. Presumably, no #oarfish hat has ever existed. No human ever created an #oarfish hat or decided that somebody would like to buy them. Possibly, nobody had ever even viewed the #oarfish hat listing until I stumbled onto it.

In a sense this is just an outgrowth of custom-printing services that have been around for decades, but… it’s weird, right? It’s a weird ecosystem.

But human involvement can be even worse. All of those weird YouTube kids’ videos were made by real people. Many of them are acted out by real people. But they were certainly done to market to children, on YouTube, and named and designed in order to fit into a thoughtless algorithm. You can’t tell me that an adult human was ever like “you know what a good artistic work would be?” and then made “Learn Colors Game with Disney Frozen, PJ Masks Paw Patrol Mystery – Spin the Wheel Get Slimed” without financial incentives created by an automated program.

Everything discussed so far is relatively inconsequential, foreshadowing rather than the shade itself. But algorithms are still affecting the world and harming people now – setting racially biased bail in Kentucky, making potentially biased hiring decisions, facilitating companies recording what goes on in your home, even forcing career YouTubers to scramble and pivot as their videos become more or less recommended.

To be clear, algorithms also do a great deal of good – increasing convenience and efficiency, decreasing resource consumption, probably saving lives as well. I don’t mean to write this to say “algorithms are all-around bad”, or even “algorithms are net bad”. Sometimes it’s solely with good intentions, but it still sounds incredibly creepy, like how Facebook is judging how suicidal all of its users are.

This is an elegant instance of Goodhart’s Law. Goodhart’s Law says that if you want a certain result and issue rewards for a metric related to the result, you’ll start getting optimization for the metric rather than the result.

The YouTube algorithm – like other algorithms across the web – was created to connect people with content (in order to sell to advertisers, etc.). Producers of content want to attract as much attention as possible to sell their products.

But the algorithms just aren’t good enough to perfectly offer people the online content they want. They’re simplified, rely on keywords, can be duped, etcetera. And everyone knows that potential customers aren’t going to trawl through the hundreds of pages of online content themselves for the best “novelty mug” or “kid’s video”. So a lot of content exists, and decisions are made, that fulfill the algorithm’s criteria rather than our own.
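As a toy illustration of that last point, here is a small Python sketch. It is a made-up ranker, not how YouTube, Amazon, or any real site works: the scoring rule (count the query words that appear in a title), the function names, and the example titles are all assumptions chosen to show a keyword metric rewarding keyword-stuffing over anything a person would call a good title.

```python
def keyword_score(title: str, query: str) -> int:
    """Hypothetical relevance metric: how many distinct query words appear in the title."""
    title_words = set(title.lower().split())
    query_words = set(query.lower().split())
    return sum(1 for word in query_words if word in title_words)


def rank(titles: list[str], query: str) -> list[str]:
    """Order titles by the metric, best first. The metric is all this ranker can see."""
    return sorted(titles, key=lambda t: keyword_score(t, query), reverse=True)


# Two invented titles: one written for a human reader, one written for the metric.
titles = [
    "A gentle story about a fox who learns to share",
    "Learn Colors Game Frozen Paw Patrol Kids Video Spin Wheel Slime",
]
query = "kids video learn colors paw patrol"

ranked = rank(titles, query)
for title in ranked:
    print(keyword_score(title, query), title)
```

The stuffed title matches every word of the query and the story matches none, so the stuffed title comes out on top. Nothing in the scoring rule knows or cares which one a parent would actually pick.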

In a sense, when we look at the semi-coherent output of algorithms, we’re looking into the uncanny valley between the algorithm’s values and our own.

We live in strange times. Good luck to us all for 2020.

Aside from its numerous forays into real life, algorithmic horror has also been at the center of some stellar fiction. See:

- cripes does anyone remember Google People [short]

- The Gig Economy [long]

Interesting notion. I’ll comment more later when I’ve time, but quickly wanted to flag that this notion reminded me of John Danaher’s idea of “techno-superstition” in the face of opaque but omnipresent algorithmic decisions: https://philosophicaldisquisitions.blogspot.com/2019/10/escaping-skinners-box-ai-and-new-era-of.html?m=1 Your idea of algorithmic horror is distinct, but feels like a counterpart somehow.


>We live in strange times. Good luck to us all for 2020.

I’m reading this now in 2021 and… well, we do live in strange times.

I’ve had the thing where I talked to someone about something and they almost immediately saw an ad for it. Several times. I think it must be chance plus some general targeting based on age groups and interests, but still, it feels creepy.
