This is the fifth post in a sequence of blog posts on state biological weapons programs. (“A taboo” was originally going to be the second-to-last post, but it has been switched with “The shadow of nuclear weapons”; the index in earlier posts has been updated accordingly.) Others will be linked here as they come out:

1.0 Introduction

2.1 The empty sky: A history of state biological weapons programs

2.2 The empty sky: How close did we get to BW usage?

3.1 Filters: Hard and soft skills

3.2 Filters: A taboo

3.3 Filters: The shadow of nuclear weapons

4.0 Conclusion: Open questions and the future of state BW

In 3.1: Hard and soft skills, we discussed the possibility that the Germy Paradox exists because bioweapons aren’t actually easy to make. Today, we look to history to discuss another possibility: that whether or not they’re effective, some kind of taboo or cultural reason keeps them from being used.

This is not a new idea, although there’s no real consensus. I separate scholarly explanations for the Taboo Filter into two schools: the humaneness hypothesis and the treachery hypothesis. In the humaneness hypothesis, people reject BW because they are unnecessarily cruel. The treachery hypothesis asserts that the taboo is an outgrowth of ancient beliefs about poison and disease in warfare: that they are secretive, unfair, inexplicable, and fundamentally evil. This hypothesis has many facets and intermingles with an evolved revulsion to poison and contamination.

But first, how do weapons taboos break?

This filter explanation is particularly interesting because taboos exist at the whim of culture and need not rest on any concrete reasons. If we are protected by a taboo against BW usage, how resilient is that protection?

Trying to assess the strength of a taboo is difficult. We cannot reliably predict the future or the vicissitudes of future policy decisions, particularly when it comes to rare events like BW development or usage.

This is especially true when we are not even certain of the taboo’s origin. But we can perhaps compare it to another case: the chemical weapons taboo. Although chemical and biological weapons are not terribly similar, they are often treated similarly in policy (Smith 2014) and are often lumped together under categories such as “biochemical weapons”, “CBW” (chemical and biological weapons), “CBRN” (chemical, biological, radiological and nuclear weapons), or “WMD” (weapons of mass destruction), so comparing policy decisions about the two is appropriate.

Chemical weapons have been used much more frequently than BW, and hence the taboo against them has been broken on multiple occasions. The Hague Conventions were broken by the use of poison gas in WW1, and the Geneva Protocol was broken by chemical and biological weapons use by, among others, Japan, Iraq, and Syria. (Jefferson 2014) Weapon usage is subject to “popularity”, and the as many as 161 uses of chemical weapons in Syria have probably built upon one another and reduced psychological and political barriers to future attacks. (Revill 2017) This taboo may already be on unsteady ground.

More directly, the USSR created the largest biological weapons program of all time after signing the Biological Weapons Convention, and Iraq operated an enormous program in secret until 1991. (Wheelis and Rózsa 2009) In addition to a handful of bioterror efforts in modern times, several states are currently suspected of having biological weapons programs. (A list and sources can be found in Aftergood, 2000.) The BW taboo, too, may not be as resilient as it appears. That said, it seems likely that an outright declaration or usage of biological weapons by a state would both provoke a far stronger response than covert programs have and severely erode the taboo. The likelihood of this last possibility is what seems most concerning. To understand it, however, we must understand what factors lie behind the apparent taboo today.

Humaneness

The first school of thought I consider holds that a taboo exists because biological and chemical weapons are seen as unacceptably inhumane compared to conventional weapons. This seems to have been the motivation of one of the earliest modern explicit taboos against chemical and biological weapons usage, the 1863 Lieber Code (Jefferson 2014). This set of guidelines for humane warfare in the US Army included the following:

Article 16: “Military necessity does not admit of cruelty – that is, the infliction of suffering for the sake of suffering or for revenge, nor of maiming or wounding except in fight, nor of torture to extort confessions. It does not admit of the use of poison in any way, nor of the wanton devastation of a district. It admits of deception, but disclaims acts of perfidy; and, in general, military necessity does not include any act of hostility which makes the return to peace unnecessarily difficult.”

Article 70: “The use of poison in any manner, be it to poison wells, or food, or arms, is wholly excluded from modern warfare. He that uses it puts himself out of the pale of the law and usages of war.”

Lieber, 1863

This code influenced later guidelines for warfare, leading to prohibitions on poison and asphyxiating gases in the Hague Conventions and, later, on chemical and bacteriological weapons in the Geneva Protocol.

As a principle, the humaneness taboo relies implicitly on at least one of two assumptions. The first is that death or injury from BW is worse than the same from conventional weapons. The second is that BW are more likely to be used against civilian populations, or otherwise to inflict harm on people who would not be affected by conventional weapons. It is easy to imagine that a days-long struggle with a lethal case of smallpox is less humane than an instantaneous death from a bomb, or that biological weapons are frequently targeted at civilians. But these assumptions should be assessed.

There is some evidence that soldiers affected by chemical weapons during World War 1 had higher rates of post-traumatic stress disorder (Jefferson 2014), and diseases are obviously capable of causing protracted suffering. Still, objections have been raised to the notion that biological weapons harm targets more than conventional weapons do. Early US responses to the Lieber Code, quoted above, point out that the effects of “poison” weapons are not necessarily worse than those of sinking ships and drowning enemy soldiers. (Zanders 2003) BW may even be better: many pathogens give infected enemies “a fighting chance” to recover completely, unlike a kinetic attack that maims or kills outright. (Rappert 2003) A minority objection holds that since war-makers are motivated to end wars quickly, it may be inhumane to ban any kind of battlefield weapon, on the grounds that removing a country’s best strategy will prolong the war and thus extend the suffering that goes along with it. (Rappert 2003) These utilitarian frameworks are important to consider, although when tracing the roots of norms, the perceived tradeoffs matter more than the actual ones. Either way, neither academia nor military decision-makers seem to have considered these points in detail when making choices about BW, which suggests that this kind of reasoned analysis is not being done anywhere.

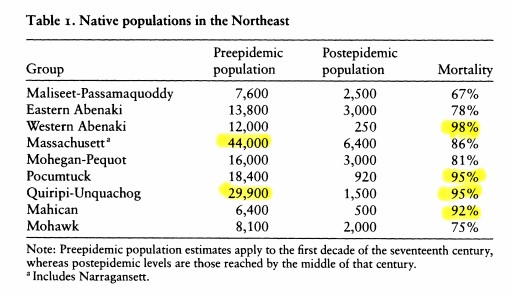

The second assumption that the humaneness hypothesis may rely upon is that biological weapons are more likely than conventional weapons to be used on civilians. There is some evidence for this: Japanese BW were used extensively against civilians (Barenblatt 2004), and targets of later BW programs during the Cold War included cities and agriculture. (Wheelis and Rózsa 2009) But the far larger and more influential nuclear war plans of the Cold War included the destruction of cities and billions of civilian lives (Ellsberg 2017), even after both the Soviet and American governments had agreed to give up their BW programs. Sparing civilian lives in worst-case scenarios cannot have been a military priority, so concern for civilians alone does not explain why BW in particular were renounced.

If the humaneness hypothesis is true, we should expect states to be more comfortable facing and wielding nonlethal BW. For chemical weapons, and perhaps for biological weapons as well, this seems to be true. (Pearson 2006, Martin 2016) The American, Soviet, and Japanese BW programs all included vast efforts to develop agricultural weapons, which damage crops or livestock without affecting humans. (Wheelis and Rózsa 2009) Nonlethal anti-personnel weapons were a major component of the American BW program (Hay 1999) as well as an end goal of the South African BW program. (Wheelis and Rózsa 2009) As for chemical weapons, the nonlethality of defoliants is considered a major reason the Kennedy administration used those weapons in particular during the Vietnam War (Martin 2016), although other explanations have been proposed as well, as described later in this piece. It is less clear whether revelations of a nonlethal BW program today would provoke less fear than revelations of a lethal one; recent literature has not discussed this question.

Disconcertingly, Alan Pearson suggests that the promise of incapacitating nonlethal BW may inspire the development of other BW tactics. (Pearson 2006) There is precedent for this claim: while nonlethal weapons were an eventual goal of the South African program (Wheelis and Rózsa 2009), it planned to develop anthrax weapons first. Any erosion of the norm against BW, even in the name of humane weapons, may open the door to more overall use of BW, humane or not. (Ilchmann and Revill 2014)

Powerful and invisible: The treachery hypothesis

A separate body of thought holds that biological warfare exists in the public mind in a category that can be described as “treacherous”. This is related to what Jessica Stern calls the “dreaded” nature of BW: it is invisible, unfamiliar, and triggers a disproportionate degree of disgust and fear. (Stern 2003) In explaining this nature, proponents generally point to the long history of revulsion to poison and disease. That revulsion may have begun in human evolution, as an intuitive aversion to sickness and contamination that kept our ancestors alive. (Cole 1998)

This trend can be observed in a huge variety of cultures: in Hindu laws of war from 600 AD (Cole 1998), to poison’s association in Christianity with the devil and witchcraft (van Courtland Moon 2008), to South American tribes that allowed warriors to poison their arrows when hunting but not for war. (Cole 1998) Poison and disease are often seen as acceptable tools against subhumans, but not against equals. (Zanders 2003)

It’s important not to overstate this pattern: the taboo is not a human universal. Both the Bible and the Quran contain provisions on how to wage war, but neither forbids poison, disease, or the like as weapons. (Zanders 2003) In Europe, despite official prohibitions beginning in the Renaissance, the use of poison weapons was defended until 1737. (Price 1995) Nonetheless, the taboo is notably common across human cultures. It is hard to imagine early taboos arising for humanitarian reasons, when conventional warfare before guns and modern medical treatment was already so disabling. Instead, toxic weapons may have been tabooed because they were seen as unbalanced: hard to detect, difficult to explain, and near-impossible to treat.

Proponents of this view rarely address an apparent contradiction it presents: that poison weapons are taboo because they are too powerful. On its face this seems absurd, since combatants do not usually forgo a weapon because it works too well. Richard Price addresses this and suggests that despite the conception of poison as a “woman’s weapon” and a treacherous “equalizer” between forces, it is not actually very effective as a weapon. (Price 1995) Similarly, in modern settings, Sonia Ben Ouagrham-Gormley makes a compelling case that the threat of biological weapons programs has been overstated, and that their actual accomplishments have been underwhelming. (Ben Ouagrham-Gormley 2014)

Additionally, the persistence of these taboos through the modern age has not been fully explained. Through the treachery lens, poison and disease are thought of as “the unknown” and are often associated with magic. (Price 1995) Today, we understand much more about biology. Does this imply that the taboo is weaker now than it has been? If not, why has a taboo on biological weapons persisted through recent history, when no comparable taboo exists against, for instance, bullets, fire, or explosives? The theory does seem generally robust, and the comparisons to historical taboos are compelling, but existing research does not explain why the taboo persists or why it is so specific to biology.

Taboos into the future

What kills a taboo? Susan Martin discusses the idea in the context of US chemical weapons usage in Vietnam. In this case, Martin argues, politicians were able to override one norm by asserting another: that the use of defoliant chemical weapons was acceptable because the viable alternative was the use of nuclear weapons, which were also taboo. (Martin 2016) Kai Ilchmann and James Revill argue that this is part of a string of incidents that have eroded the biological and chemical weapons taboos over time. (Ilchmann and Revill 2014) In addition, many believe that the Biological Weapons Convention is nearly or entirely useless, since it contains no provisions for verification and allows “defensive research” that is practically indistinguishable from offensive research. (Zanders 2003, McCauley and Payne 2010, Koblentz 2016)

The two hypotheses presented here are not the only ones. Further research in this area might more firmly identify the source of the taboo and help determine how it can be strengthened.

The next post will discuss the idea that there is no taboo, or at least no functional taboo anymore. If so, the lack of observed weapons programs or usage reflects purely strategic decisions.

References

Aftergood, Steven. “States Possessing, Pursuing or Capable of Acquiring Weapons of Mass Destruction.” Federation of American Scientists, July 29, 2000. https://fas.org.

Barenblatt, Daniel. A Plague upon Humanity: The Secret Genocide of Axis Japan’s Germ Warfare Operation. New York: HarperCollins, 2004.

Ben Ouagrham-Gormley, Sonia. Barriers to Bioweapons: The Challenges of Expertise and Organization for Weapons Development. Cornell University Press, 2014.

Cole, Leonard A. “The Poison Weapons Taboo: Biology, Culture, and Policy.” Politics and the Life Sciences 17 (September 1, 1998): 119–32. https://doi.org/10.1017/S0730938400012119.

Courtland Moon, John Ellis van. “The Development of the Norm against the Use of Poison: What Literature Tells Us.” Politics and the Life Sciences 27, no. 1 (2008): 55–77.

Ellsberg, Daniel. The Doomsday Machine: Confessions of a Nuclear War Planner. Bloomsbury Publishing USA, 2017.

Hay, Alastair. “Simulants, Stimulants and Diseases: The Evolution of the United States Biological Warfare Programme, 1945–60.” Medicine, Conflict and Survival 15, no. 3 (1999): 198–214.

Ilchmann, Kai, and James Revill. “Chemical and Biological Weapons in the ‘New Wars.’” Science and Engineering Ethics 20, no. 3 (September 1, 2014): 753–67. https://doi.org/10.1007/s11948-013-9479-7.

Jefferson, Catherine. “Origins of the Norm against Chemical Weapons.” International Affairs 90, no. 3 (May 1, 2014): 647–61. https://doi.org/10.1111/1468-2346.12131.

Koblentz, Gregory D. “Quandaries in Contemporary Biodefense Research.” In Biological Threats in the 21st Century: The Politics, People, Science and Historical Roots, 303–328. 2016.

Lieber, Francis. “Instructions for the Government of Armies of the United States in the Field (‘The Lieber Code’).” Government Printing Office, April 24, 1863. Lillian Goldman Law Library, The Avalon Project.

Martin, Susan B. “Norms, Military Utility, and the Use/Non-use of Weapons: The Case of Anti-plant and Irritant Agents in the Vietnam War.” Journal of Strategic Studies 39, no. 3 (2016): 321–364.

McCauley, Phillip M., and Rodger A. Payne. “The Illogic of the Biological Weapons Taboo.” Strategic Studies Quarterly 4, no. 1 (2010): 6–35.

Pearson, Alan. “Incapacitating Biochemical Weapons: Science, Technology, and Policy for the 21st Century.” Nonproliferation Review 13, no. 2 (2006): 151–188.

Price, Richard. “A Genealogy of the Chemical Weapons Taboo.” International Organization 49, no. 1 (1995): 73–103.

Rappert, Brian. “Coding Ethical Behaviour: The Challenges of Biological Weapons.” Science and Engineering Ethics 9, no. 4 (2003): 453–470.

Revill, James. “Past as Prologue? The Risk of Adoption of Chemical and Biological Weapons by Non-State Actors in the EU.” European Journal of Risk Regulation 8, no. 4 (December 2017): 626–42. https://doi.org/10.1017/err.2017.35.

Smith, Frank. American Biodefense: How Dangerous Ideas about Biological Weapons Shape National Security. Cornell University Press, 2014.

Stern, Jessica. “Dreaded Risks and the Control of Biological Weapons.” International Security 27, no. 3 (2003): 89–123.

Wheelis, Mark, and Lajos Rózsa. Deadly Cultures: Biological Weapons since 1945. Harvard University Press, 2009.

Zanders, Jean Pascal. “International Norms Against Chemical and Biological Warfare: An Ambiguous Legacy.” Journal of Conflict and Security Law 8, no. 2 (October 1, 2003): 391–410. https://doi.org/10.1093/jcsl/8.2.391.